TL;DR

- Blob‑based design: Researchers propose moving execution‑payload data into blobs to reduce the bandwidth load on Ethereum validators and improve scalability.

- Data availability: The Block‑in‑Blobs model uses cryptographic commitments and sampling to confirm that data is available without requiring validators to download it in full.

- Ecosystem upgrades: The proposal aligns with broader work, including ERC‑8211’s programmable workflows and discussions around unifying data gas.

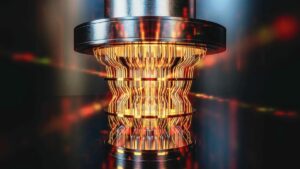

The latest research effort explores a design that relocates execution‑payload data into blobs published alongside blocks on the Ethereum blockchain, a shift meant to ease bandwidth pressure and support broader scalability goals. The idea builds on earlier work and responds to rising data demands that have strained validators across the Ethereum network. By rethinking how core data is packaged and verified, researchers aim to streamline processing without compromising security.

Origins of the Block‑in‑Blobs Proposal

A recent post titled “Blocks Are Dead. Long Live Blobs,” co‑authored by Toni Wahrstatter and other contributors, outlines EIP‑8142, also known as Block‑in‑Blobs. The draft proposes encoding transaction data directly into the blobs introduced by EIP‑4844 (proto‑danksharding), a milestone on Ethereum’s roadmap. Instead of downloading full execution payloads, validators would verify cryptographic commitments to that data, reducing the need for heavy data replication across the network.
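To make "encoding transaction data into blobs" concrete, here is a minimal sketch of packing arbitrary payload bytes into EIP‑4844‑sized blobs (4096 field elements of 32 bytes each). The 31‑usable‑bytes‑per‑element layout, with a leading zero byte that keeps every element below the BLS12‑381 scalar field modulus, is an assumption borrowed from common rollup practice, not necessarily the encoding EIP‑8142 specifies, and `pack_into_blobs`/`unpack_from_blobs` are hypothetical helpers. Producing a real KZG commitment requires a KZG library and the trusted setup, so the sketch only shows the EIP‑4844 step that turns a 48‑byte commitment into the 32‑byte versioned hash.

```python
import hashlib

# EIP-4844 blob parameters.
FIELD_ELEMENTS_PER_BLOB = 4096
BYTES_PER_FIELD_ELEMENT = 32
BLOB_SIZE = FIELD_ELEMENTS_PER_BLOB * BYTES_PER_FIELD_ELEMENT  # 131072 bytes

# Assumption: 31 payload bytes per element, prefixed with a zero byte so each
# element is < 2**248, safely below the ~255-bit BLS12-381 scalar field modulus.
USABLE_BYTES_PER_ELEMENT = 31
USABLE_BYTES_PER_BLOB = FIELD_ELEMENTS_PER_BLOB * USABLE_BYTES_PER_ELEMENT  # 126976

VERSIONED_HASH_VERSION_KZG = b"\x01"


def pack_into_blobs(payload: bytes) -> list[bytes]:
    """Hypothetical packing: split payload into zero-padded, 131072-byte blobs."""
    blobs = []
    for start in range(0, max(len(payload), 1), USABLE_BYTES_PER_BLOB):
        chunk = payload[start:start + USABLE_BYTES_PER_BLOB]
        elements = []
        for i in range(FIELD_ELEMENTS_PER_BLOB):
            piece = chunk[i * USABLE_BYTES_PER_ELEMENT:(i + 1) * USABLE_BYTES_PER_ELEMENT]
            elements.append(b"\x00" + piece.ljust(USABLE_BYTES_PER_ELEMENT, b"\x00"))
        blobs.append(b"".join(elements))
    return blobs


def unpack_from_blobs(blobs: list[bytes], length: int) -> bytes:
    """Inverse of pack_into_blobs; `length` strips the trailing padding."""
    data = b"".join(
        blob[i + 1:i + BYTES_PER_FIELD_ELEMENT]  # drop the leading zero byte
        for blob in blobs
        for i in range(0, BLOB_SIZE, BYTES_PER_FIELD_ELEMENT)
    )
    return data[:length]


def kzg_to_versioned_hash(commitment: bytes) -> bytes:
    """EIP-4844 versioned hash: version byte 0x01 + sha256(commitment)[1:]."""
    if len(commitment) != 48:
        raise ValueError("a KZG commitment is 48 bytes")
    return VERSIONED_HASH_VERSION_KZG + hashlib.sha256(commitment).digest()[1:]


payload = bytes(range(256)) * 1000  # 256000 bytes of example data
blobs = pack_into_blobs(payload)
assert unpack_from_blobs(blobs, len(payload)) == payload
print(len(blobs), "blobs")
```

A validator under this scheme would check the small versioned hashes and commitments rather than re‑downloading `payload` itself; the padding cost is why payload length must travel with the data.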

Addressing Bandwidth and Data Availability Challenges

The proposal targets a bottleneck created by growing block sizes and higher gas limits, which force validators to handle increasingly large datasets. Blobs, added in the Dencun upgrade, already let data be committed to efficiently without being stored permanently onchain. EIP‑8142 extends this approach by embedding execution‑payload data into blobs, enabling validators to rely on sampling techniques that confirm data availability without every node downloading the full data.
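Why does sampling work without full downloads? The sketch below assumes a simple 2x erasure code, under which the data can be reconstructed from any half of the extended chunks, so a producer must withhold more than 50% of them to make the block unrecoverable. Each uniformly random query then has at least a 1/2 chance of hitting a missing chunk, and the chance that k queries all miss the withholding shrinks as (1 − f)^k. The coding rate and sample counts are illustrative assumptions, not EIP‑8142 parameters, and the function names are hypothetical.

```python
import random


def detection_miss_probability(withheld_fraction: float, samples: int) -> float:
    """Chance that `samples` independent uniform queries all land on available
    chunks, i.e. that a sampling validator fails to notice the withholding."""
    return (1.0 - withheld_fraction) ** samples


def simulate_miss_rate(n_chunks: int, withheld_fraction: float, samples: int,
                       trials: int, seed: int = 0) -> float:
    """Monte Carlo estimate of the same miss probability."""
    rng = random.Random(seed)
    n_withheld = int(n_chunks * withheld_fraction)
    misses = 0
    for _ in range(trials):
        # The adversary hides a random subset of the extended chunks.
        withheld = set(rng.sample(range(n_chunks), n_withheld))
        # The validator queries `samples` chunk indices, with replacement.
        if all(rng.randrange(n_chunks) not in withheld for _ in range(samples)):
            misses += 1
    return misses / trials


# With a 2x erasure code, withholding just over half the chunks is the
# adversary's cheapest attack, so f = 0.5 bounds the miss probability.
for k in (8, 16, 30):
    print(k, detection_miss_probability(0.5, k))
```

The design point is that each validator's download cost grows with the number of samples k, not with the block size, while the failure probability falls exponentially in k.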

Implications for zkEVM and Validator Workflows

The shift becomes more relevant in a future shaped by zkEVM systems. Zero‑knowledge proofs can show that execution was correct, but they do not guarantee that the underlying data is accessible. Wahrstatter notes that in such a setup validators would verify proofs rather than re‑execute transactions, creating a risk that the data itself is withheld. Block‑in‑Blobs aims to close this gap by making data availability explicit, allowing validators to sample blob data while preserving the integrity of Ethereum’s consensus model.
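The gap described above can be expressed as an acceptance rule: a valid proof alone should not be enough. The following sketch is a toy model under loudly stated assumptions: `proof_ok` is a hypothetical placeholder for the result of verifying a zkEVM execution proof, and per‑chunk SHA‑256 digests stand in for KZG commitments and their opening proofs. It is not how any client implements this, only an illustration of requiring both checks.

```python
import hashlib
import random
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class BlockHeader:
    # Hypothetical stand-ins: SHA-256 digests replace KZG commitments, and
    # `proof_ok` replaces the result of verifying a zkEVM execution proof.
    chunk_hashes: List[bytes]
    proof_ok: bool


def sample_available(header: BlockHeader,
                     fetch_chunk: Callable[[int], Optional[bytes]],
                     samples: int, rng: random.Random) -> bool:
    """Query random chunks; fail if any is missing or mismatches its commitment."""
    n = len(header.chunk_hashes)
    for _ in range(samples):
        i = rng.randrange(n)
        chunk = fetch_chunk(i)
        if chunk is None or hashlib.sha256(chunk).digest() != header.chunk_hashes[i]:
            return False
    return True


def accept_block(header: BlockHeader,
                 fetch_chunk: Callable[[int], Optional[bytes]],
                 samples: int = 16, seed: int = 0) -> bool:
    # A valid execution proof is necessary but not sufficient: the block is
    # accepted only if its data also passes availability sampling.
    if not header.proof_ok:
        return False
    return sample_available(header, fetch_chunk, samples, random.Random(seed))


chunks = [bytes([i]) * 32 for i in range(64)]
header = BlockHeader([hashlib.sha256(c).digest() for c in chunks], proof_ok=True)
print(accept_block(header, lambda i: chunks[i]))  # honest producer -> True
print(accept_block(header, lambda i: None))       # data withheld -> False
```

In the second call the proof is still "valid", yet the block is rejected, which is exactly the case a proof‑only validator would miss.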

Toward Unified Data Costs and Smarter Transactions

Researchers also highlight potential changes to how the network accounts for data. Today, execution gas and blob gas are metered separately, each with its own limit, but a unified “data gas” model could align costs and reduce overlapping limits. Meanwhile, Biconomy and the Ethereum Foundation’s UX track are advancing ERC‑8211, a standard that turns transactions into programmable workflows. Together, these efforts reflect a broader wave of experimentation shaping Ethereum’s long‑term evolution.
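The difference between today's two meters and a unified one can be sketched with a little arithmetic. The calldata and blob constants below follow EIP‑2028 and EIP‑4844 (ignoring the EIP‑7623 calldata floor added in Pectra, and assuming 31 usable bytes per 32‑byte blob field element); everything under "hypothetical unified meter", including `DATA_GAS_PER_BYTE` and `DATA_GAS_LIMIT`, is an invented illustration, not a proposed specification.

```python
# EIP-2028 calldata pricing, charged as execution gas (EIP-7623 floor ignored).
CALLDATA_ZERO_BYTE_GAS = 4
CALLDATA_NONZERO_BYTE_GAS = 16

# EIP-4844: every blob consumes a fixed 2**17 blob gas in a separate fee market.
GAS_PER_BLOB = 2**17
USABLE_BYTES_PER_BLOB = 4096 * 31  # assumes 31 usable bytes per field element


def calldata_gas(data: bytes) -> int:
    """Execution gas for carrying `data` as calldata."""
    zeros = data.count(0)
    return zeros * CALLDATA_ZERO_BYTE_GAS + (len(data) - zeros) * CALLDATA_NONZERO_BYTE_GAS


def blob_gas(num_bytes: int) -> int:
    """Blob gas for carrying `num_bytes` in whole blobs."""
    blobs = -(-num_bytes // USABLE_BYTES_PER_BLOB)  # ceiling division
    return blobs * GAS_PER_BLOB


# Hypothetical unified meter: every data byte costs the same "data gas"
# wherever it is carried, and all data is checked against one limit.
DATA_GAS_PER_BYTE = 1         # hypothetical rate
DATA_GAS_LIMIT = 1_000_000    # hypothetical per-block limit


def unified_data_gas(calldata: bytes, blob_bytes: int) -> int:
    return (len(calldata) + blob_bytes) * DATA_GAS_PER_BYTE


def fits_unified_limit(calldata: bytes, blob_bytes: int) -> bool:
    return unified_data_gas(calldata, blob_bytes) <= DATA_GAS_LIMIT


full_blob = b"\x01" * USABLE_BYTES_PER_BLOB
print(calldata_gas(full_blob), "execution gas vs", blob_gas(len(full_blob)), "blob gas")
```

Under current rules the same 126,976 non‑zero bytes cost 2,031,616 execution gas as calldata but 131,072 blob gas in one blob, and the two figures are priced by different base fees against different limits. A single meter like the toy one above removes that split, which is the kind of alignment the researchers describe.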